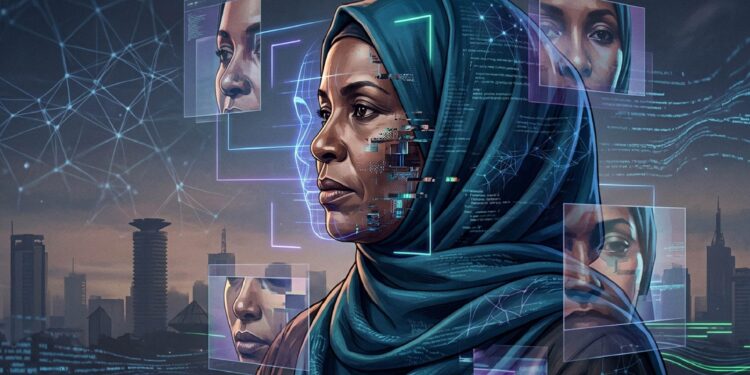

It started as a simple experiment.

I uploaded a portrait of myself, professionally dressed and wearing a hijab, into Nano Banana, an artificial intelligence image generator. Then I typed a short instruction: “Remove the hijab.”

Within seconds, the system produced a new version of the photograph. The headscarf was gone. In its place was styled hair. The neckline had shifted slightly, revealing part of the chest—something that had never appeared in my original photo.

There was no warning, no hesitation, and no recognition that removing the hijab violates a deeply held religious practice. I tested the same prompt on ChatGPT, another widely used generative AI system. The results were the same.

What looked like a simple edit revealed a bigger problem. These systems don’t understand culture or meaning. That blind spot creates risks for Muslim women, whose images can be altered without their consent and used against them.

Hanifa Adan, a human rights defender and journalist, remembers the moment she saw an image of herself that she had never consented to. She appeared in a bikini, a garment she would never choose, on a body that was hers, yet no longer hers. It violated her identity, dignity, and faith.

“I just cried. I felt confused at first, then sick,” she recalls. “It felt like someone had stolen something from me, my image, my identity. I realized that anyone could see it and believe it, especially people I know.”

Hanifa experienced this again in 2024, when another image, of a woman in a blue dress digitally altered to appear transparent, circulated online. As it went viral, people identified the woman in the image as Hanifa. They sexualized her, judged her, and treated the image as real.

By the time Hanifa clarified that the image was fake, the damage had already been done, leaving behind misinformation, humiliation, and public judgment.

“The bikini was the worst one, not even because it was sexual, but because of what it means. As a Muslim woman, that’s something that can affect how people see you. I kept thinking about my family seeing it before I even had a chance to explain, and that fear sat in my chest for days,” she says.

Hanifa’s experience represents a broader pattern in which women are targets of AI-generated image abuse. A 2019 report by DeepTrace found that 96% of deepfake content online was non-consensual sexual material, with 99% of victims being women. By 2023, nearly 96,000 deepfake videos had been recorded online, a sharp increase from previous years.

More recent investigations show that manipulated images of Muslim women are being widely generated and shared by prompting AI tools to remove hijabs or alter clothing. A WIRED investigation found AI users generating non-consensual images that alter women’s clothing, including hijabs, saris, and other cultural or religious dress. In a review of 500 AI-generated images, about 5% involved manipulation of cultural or religious identity.

This points to a growing pattern of using AI to sexualize women and distort cultural expression. With just a photo and a prompt, AI tools can produce images that look real but are entirely fabricated. They are designed to follow instructions, not to consider consequences. That gap, between what the system can do and what it should do, reveals a flaw in how these systems are built.

When an image is changed, it is not just the appearance that shifts. It is how a person is seen and judged. Identity is reshaped without consent, says Kevin Ndegwa, a motion designer and filmmaker at Africa Uncensored.

“AI tools currently lack a nuanced understanding of cultural and religious sensitivity,” says Ndegwa.

“When prompts involve removing a hijab, the system treats it as simple visual editing, rather than a significant cultural act,” he adds.

Ndegwa says that even without explicit prompts, some AI tools struggle to preserve cultural identity. When users request changes, such as removing a hijab, those edits are often carried out without any built-in safeguards.

Through his project Echoes of a Silent Protester, Ndegwa examines how AI reshapes the representation of Muslim women, particularly those who wear visible markers of faith such as the hijab.

His findings show that AI interprets images as patterns, shapes, and probabilities—not as identity or belief. A hijab is not recognized as a religious symbol. It is treated as a visual element that can be removed or altered.

Ndegwa explains that this gap between technical function and cultural meaning is where harm emerges. Because many AI models are trained on datasets that reflect dominant Western visual norms, they can normalize certain appearances while treating cultural or religious markers as optional rather than protected identity elements. For Muslim women, this creates a specific vulnerability because identity markers are consistently misread by systems that lack cultural context. In doing so, the AI tools do more than edit images; they reshape how identities are visually represented, often without awareness or consent.

Removing the hijab violates religious identity and carries serious social consequences. For a woman who wears the hijab, an image of herself without it is considered private. When such an image is generated and shared without consent, that boundary is broken, and it can damage reputations, strain family relationships, and expose women to harassment and judgment.

Sheikh Jafaar Badru explains that in Islam, how a person is represented is deeply tied to faith and consent.

“The Qur’an asks Muslims, men and women, to cover their nakedness. Exposing what should not be exposed or filters that alter or disfigure someone’s features are considered sinful. Doing this to another person without their consent would be considered sinful and deserving of condemnation,” he says.

To reduce their risk of image-based abuse, some women limit their online presence or withdraw from digital spaces altogether.

“I try to be careful about what I post,” says Leila Ahmed. “Even a single photo can be twisted into something that isn’t true.”

Yet not all interactions with these tools are intended to harm.

“There are definitely cases where AI is used intentionally to harass or target women, such as creating altered images to shame or discredit them. However, a significant portion of harm also comes from casual or uninformed use. People experiment with these tools without fully understanding the implications, which makes education and awareness just as important as regulation. And the most important point is that AI image manipulation doesn’t exist in a vacuum. It amplifies existing social inequalities,” says Kevin Ndegwa.

Fatuma Musa, a young Muslim woman in Mombasa, uses AI image generators privately. She creates images of herself without a hijab—not to share, but out of curiosity.

“Sometimes I make images without a hijab and sometimes in clothes I wouldn’t normally wear,” she says. “I don’t post them anywhere. They’re just for me. It’s fun to see myself in a way I usually don’t.”

But even such private use may not be as contained as it feels, because AI systems do not just generate images; they learn from them.

Experts warn that uploaded images and prompts can be used to refine how accurately these tools replicate human faces and bodies. The more images a system processes, the more realistic and potentially invasive its outputs become. This is part of what makes AI-generated images so convincing. They are not random creations; they are reflections built from accumulated data. Every upload and prompt feeds into a system that recognises patterns in faces and behaviour.

“What feels private is often not private. Once images are uploaded or accessed through apps, AI can analyse your images over time and build a profile of who you are. When apps are given access to your gallery, they can process your images and extract information even without you directly sharing them,” explains Mwende Mukwanyaga, an AI ethics expert.

Regulation is struggling to keep pace with the scale of the problem, but some governments are beginning to act. The European Union now requires transparency in AI-generated content, while the United Kingdom has criminalized non-consensual deepfake pornography. In January 2026, Indonesia temporarily blocked access to Grok over concerns about sexually explicit and non-consensual manipulated images.

In Kenya, though the Kenya Artificial Intelligence Strategy 2025–2030 calls for ethical governance and responsible innovation, legal protections remain limited. The proposed AI Bill does not directly address image-based abuse, and existing laws—such as the Computer Misuse and Cybercrimes Act—were introduced before these technologies became widespread.

“For policymakers, there needs to be clearer regulations when using AI in image manipulation. Developers should invest in more diverse training datasets and integrate ethical checkpoints and tools that flag or restrict culturally sensitive edits into their systems. Social media platforms should improve detection and labelling of AI-manipulated content and provide users with more control over how their images are used or altered,” says Ndegwa.

Susan Mute, an advocate of the High Court, argues in a LinkedIn post that existing laws already apply and that such cases fall under offences such as cyberbullying and cyberstalking.

“When artificially generated images are used to harass or humiliate someone, the harm is not theoretical; it is deliberate and unlawful,” writes Mute.

Even where laws exist, enforcement remains difficult, and harmful content continues to circulate faster than it can be contained. For the affected women, the gap between harm and accountability is deeply felt.

“We need real safeguards. There should be limits on what these tools can generate, especially when it involves real people. There should be laws that actually recognize this as harm, because that’s what it is. And there should be consequences,” says Hanifa.

“Right now, people do this because they know they’ll get away with it,” she adds.

She also points to a cultural problem: the tendency to treat such content as entertainment.

“People need to stop treating this like a joke. Because for the person it’s happening to, it’s not funny at all,” says Hanifa.

But even as legal frameworks are debated, a deeper issue remains. AI tools reflect the people who build them and the data they are trained on. When a system can remove a hijab without hesitation, it is not simply editing an image. It is revealing what it does not recognise and who it fails to protect. For many women, the damage is public and irreversible.

This article was produced as part of the Gender+AI Reporting Fellowship, with support from the Africa Women’s Journalism Project (AWJP) in partnership with DW Akademie. The journalist used AI tools as research aids to review and summarise relevant policy and research documents and extract key statistics. All analysis, editorial decisions and final wording were done by the reporter, in line with IWOMANTODAY’s editorial standards.